Introduction/Background

51 Pegasi b, the first exoplanet discovered around a main-sequence star in 1995 [1], surprised astronomers because it's a planet that does not look like any planet in our solar system. People call it a "hot Jupiter", based on its orbital period and mass. This is not the only exotic exoplanet people have found. Since then, approximately 6,000 exoplanets [2] have been discovered, and many of them still lack counterparts to our solar system's planets. The "period, mass" classification scheme is straightforward, but it only accounts for two features of the planets. Until today, no standardized exoplanet classification scheme has been widely accepted. Therefore, we want to use unsupervised ML to create a standardized classification algorithm for exoplanets.

The main dataset we will use is the Kepler Objects of Interest (KOI) table. It is built on planet transit data from the Kepler telescope. The KOI database is a good start for our unsupervised learning.

It is also notable that the KOI dataset has classified planets as confirmed, candidate, and false positive. This is where we want the supervised learning model to work on. There has been extensive work on using ML, including CNNs and transformers[3][4], to identify false positives. We aim to reproduce and improve on these results.

Dataset Links

Problem Definition

How to use ML to discover subgroups of exoplanets? How can ML help identify more exoplanet candidates or false positives? The confirmation of exoplanets, and also the classification of exoplanets, has long been a subjective and case-by-case job. This calls for the use of ML algorithms to group planets by statistics when little physics prior knowledge can be provided, and identify false positives when subsequent observations are not yet available.

Methods

Data Preprocessing

To prepare high-quality training and test data, we will follow a three-step process: data cleaning, data transformation, and data reduction.

We will begin with a primary screening of the dataset, removing missing and irrelevant rows or columns, as well as duplicated records. Next, we will transform the data. For categorical features, one-hot encoding will be applied. For numerical features, we will use standardized normalization to ensure consistent data scales and improve optimization efficiency during training. For the light curve data, we will slice the transit light curves into segments that contain only 500 points with fixed cadence. Since light curve data is high-dimensional, we will consider extracting key features (such as the median, mean, and standard deviation) to reduce dimensionality.

Unsupervised Machine Learning

We plan to evaluate Gaussian Mixture Models (GMM) and DBSCAN. GMM is flexible and also works well for clusters of various shapes. DBSCAN is a density-based method that does not require a predefined number of clusters.

By comparing the different approaches, we aim to identify meaningful subgroups within the exoplanet dataset, as well as analyze the insights and characteristics of different clusters.

Supervised Machine Learning

The objective of the supervised machine learning method is to train a model capable of accurately classifying candidate exoplanets and false positives. This is a binary classification problem. We will evaluate Logistic Regression, Decision Tree, Random Forest, and Neural Networks.

Logistic regression is a simple yet robust classification algorithm. It can be used as the baseline for the classification problem. Tree-based methods can reveal feature importance and increase the model interpretability. Neural networks offer high flexibility of discovering patterns in high-dimensional data. It can be powerful for enhancing model accuracy. Moreover, with the neural networks, we will be able to directly use light curve data as the input and train the model to identify false positives.

Exploring these algorithms enables us to uncover hidden characteristics that differentiate observed exoplanets from false positives.

Potential Results and Discussion

Clustering (Unsupervised) Evaluation Metrics

We will use the internal evaluation metrics[5][6], which assess the quality of clustering based solely on the data itself, such as the Dunn Index (identifies cluster tightness), Silhouette Coefficient (evaluates similarity of an object to its cluster and to other clusters), and Davies-Bouldin Index (evaluates the similarity between clusters and how sparse/distinct they are to each other).

Expected results: Recover basic classification for exoplanets, potentially discover subgroups.

Supervised Learning Metrics

In supervised learning evaluation, a labeled dataset is available, allowing for a direct comparison between the predicted and true labels [7][8]. The model's performance is assessed using a variety of metrics, including Accuracy, Recall (or sensitivity), Precision, F1 score, ROC-AUC and the Confusion Matrix.

Expected results: Reaching a 0.8 accuracy for the best model.

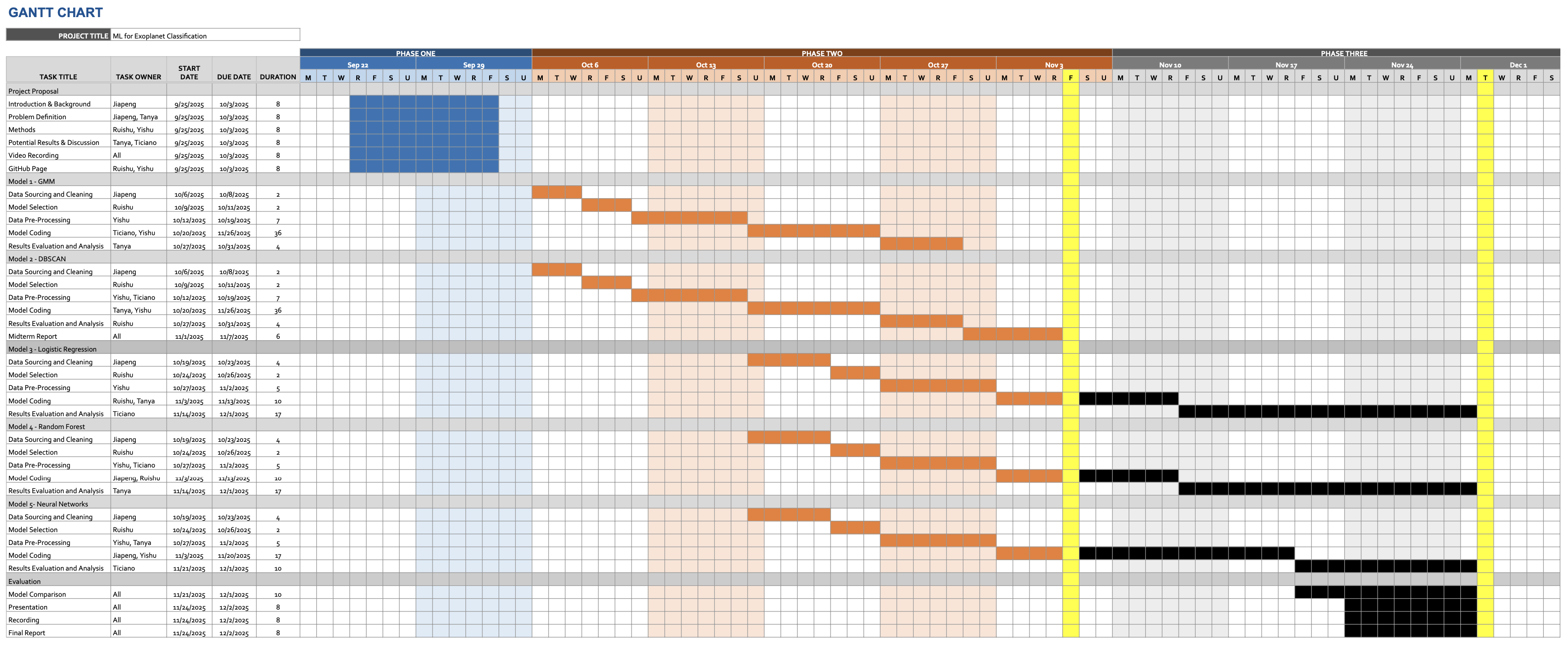

Gantt Chart

Contribution Table

| Name | Proposal Contributions |

|---|---|

| Jiapeng Gao | Introduction, Problem definition, Proposal writing, Presentation |

| Ruishu Cao | Methods, Website, Proposal writing, Presentation |

| Tanya Chauhan | Evaluation metrics, Proposal writing, Presentation |

| Melvin Ticiano Gao | Evaluation metrics, Video recording and editing, Proposal writing, Presentation |

| Yishu Ji | Methods, Website, Proposal writing, Presentation |

References

- [1] Mayor, Michel, and Didier Queloz. "A Jupiter-mass companion to a solar-type star." nature 378.6555 (1995): 355-359.

- [2] Christiansen, Jessie L., et al. "The NASA Exoplanet Archive and Exoplanet Follow-up Observing Program: Data, Tools, and Usage." arXiv preprint arXiv:2506.03299 (2025).

- [3] Choudhary, Anupma, et al. "Exoplanet Classification through Vision Transformers with Temporal Image Analysis." arXiv preprint arXiv:2506.16597 (2025).

- [4] Rafaih, Ayan Bin, and Zachary Murray. "Detecting False Positives With Derived Planetary Parameters: Experimenting with the KEPLER Dataset." arXiv preprint arXiv:2508.13801 (2025).

- [5] J.-O. Palacio-Niño and F. Berzal, "Evaluation metrics for unsupervised learning algorithms," arXiv preprint arXiv:1905.05667, 2019.

- [6] Xu, Dongkuan, and Yingjie Tian. "A Comprehensive Survey of Clustering Algorithms." Annals of Data Science, vol. 2, no. 2, 2015, pp. 165-193.

- [7] Karimi, Reihaneh, et al. "Machine Learning for Exoplanet Detection: A Comparative Analysis Using Kepler Data." arXiv, 2025, https://doi.org/10.48550/arXiv.2508.09689

- [8] Airlangga, G. (2024). Exoplanet classification through machine learning: A comparative analysis of algorithms using Kepler data. MALCOM: Indonesian Journal of Machine Learning and Computer Science, 4(3), 753-763.